“Can you recommend a book for…?”

“What are you reading right now?”

“What are your favorite books?”

I get asked those types of questions a lot and, as an avid reader and all-around bibliophile, I’m always happy to oblige.

I also like to encourage people to read as much as possible because knowledge benefits you much like compound interest. The more you learn, the more you know; the more you know, the more you can do; the more you can do, the more opportunities you have to succeed.

On the flip side, I also believe there’s little hope for people who aren’t perpetual learners. Life is overwhelmingly complex and chaotic, and it slowly suffocates and devours the lazy and ignorant.

So, if you’re a bookworm on the lookout for good reads, or if you’d like to get into the habit of reading, this book club is for you.

The idea here is simple: Every week, I’ll share a book that I’ve particularly liked, why I liked it, and several of my key takeaways from it.

I’ll also keep things short and sweet so you can quickly decide whether the book is likely to be up your alley or not.

If you’ve already read a book that I recommend or have a recommendation of your own to share, don’t be shy! Drop a comment down below and let me–and the rest of us “book clubbers”–know!

Lastly, if you want to be notified when new recommendations go live, hop on my email list and you’ll get each new installment delivered directly to your inbox.

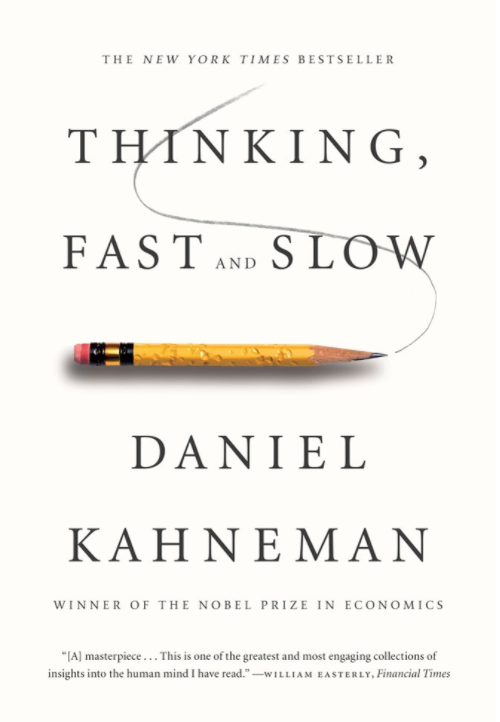

Okay, let’s get to this week’s book: Thinking, Fast and Slow by Daniel Kahneman, who’s a Nobel Prize-winning psychologist and economist, and professor emeritus at Princeton University.

Every month or so I like to read a book that I hope will just make me a little smarter.

Well, this is one of the better “make me smarter” books that I’ve read in a long time. It’s chock-full of simple but profound psychological, behavioral, and economic insights that will make you a better thinker and decision maker and help you avoid some of the more common cognitive pitfalls that lead people astray in their lives. The arguments and examples presented in the book are also exceptionally clear and orderly, which gives you a firsthand look at how a brilliant mind works and an ideal to strive toward in your own reasoning and analysis.

This book is also a good read for anyone interested in becoming a better marketer, because great marketing ideas ultimately come from a deep understanding of human psychology and persuasion, from creative translations of observations about how people think and behave into commercial applications.

One of the things that most struck me while reading this book is just how easily we can be manipulated—and just how much of our personalities and lives can run on automatic—if we don’t make a conscious effort to at least consider zigging when something in us really wants to zag.

This is particularly relevant in today’s political and social climates. The propaganda machines of every side of every debate are in overdrive, and are more sophisticated than ever, but they still draw their power from exploiting many of the thinking traps and cognitive biases discussed in this book. Simply reading about these inborn deficiencies doesn’t necessarily cure us of them, but hey, maybe it’ll help us see through some of the daily dose of demagoguery and agitprop.

Anyway, the bottom line is if you like to learn about what goes on behind the curtains of our minds and how it influences our attitudes, emotions, choices, and behaviors, then this book is for you.

Want to listen to more stuff like this? Check out my podcast!

My 5 Key Takeaways from Thinking, Fast and Slow

1

Reciprocal priming effects tend to produce a coherent reaction: if you were primed to think of old age, you would tend to act old, and acting old would reinforce the thought of old age.

My Note

Consciously controlling your thoughts and self-talk is one of the easiest ways to control your attitudes, feelings, behaviors, and even your capacity for mental and physical performance. For example, studies show that positive self-talk can increase our motivation and willingness to endure uncomfortable situations, so if you tell yourself a situation is awful and unbearable, it will feel more so, whereas telling yourself you’re separate from the pain and discomfort and are going to be okay will help you fight through it.

2

This is just what you would expect if the confidence that people experience is determined by the coherence of the story they manage to construct from available information. It is the consistency of the information that matters for a good story, not its completeness. Indeed, you will often find that knowing little makes it easier to fit everything you know into a coherent pattern.

My Note

This helps explain why some people can be so confident about things they know so little about, and why the most ignorant people are often the most certain of their positions and beliefs.

Take government and politics, for example. These days, just about everyone has a number of hardline opinions on all manner of things ranging from Trump to the electoral process, immigration, social welfare, and so on. I’ve spoken with many people about these things and have been amazed at how they could be so certain about, and yet so ignorant of, what is and what should be.

If you want to see this for yourself, the next time someone says we’re now under fascist rule, ask them to define the word fascism, and wait for the burbling. “It’s like…Nazis and Hitler and stuff!” And then for bonus babbling, ask them what “democracy” and “republic” mean. “Uhhh, it’s like, government by the people for the people!” Oh, you don’t like the electoral college? What is it? How does it work? “Durrrrrr, it doesn’t matter because it’s WAYCIST!” The next time someone voices any opinion of the economy, ask them to define the word economics and explain how the economic machine works, and wait for the crickets.

Again, I’ve done this many times just to demonstrate to people how absurd it is to get worked up about vast, complex phenomena when they don’t even understand the topics being discussed.

3

The affect heuristic is an instance of substitution, in which the answer to an easy question (How do I feel about it?) serves as an answer to a much harder question (What do I think about it?).

“The emotional tail wags the rational dog.” The affect heuristic simplifies our lives by creating a world that is much tidier than reality. Good technologies have few costs in the imaginary world we inhabit, bad technologies have no benefits, and all decisions are easy. In the real world, of course, we often face painful tradeoffs between benefits and costs.

[Paul Slovic’s] work offers a picture of Mr. and Ms. Citizen that is far from flattering: guided by emotion rather than by reason, easily swayed by trivial details, and inadequately sensitive to differences between low and negligibly low probabilities.

My Note

I try to avoid binary thinking as much as possible, and especially in situations where the stakes are high. It’s basically never true that someone or something is wholly right or wrong, and if we want to make the best possible analyses and decisions, we have to be able to understand and weigh the nuances and be able to demonstrate why someone or something is more right than wrong, or vice versa, or even mostly right or wrong.

4

Amos and I coined the term planning fallacy to describe plans and forecasts that are unrealistically close to best-case scenarios [and] could be improved by consulting the statistics of similar cases.

My Note

As Daniel says in the book, “Most of us view the world as more benign than it really is, our own attributes as more favorable than they truly are, and the goals we adopt as more achievable than they are likely to be. We also tend to exaggerate our ability to forecast the future, which fosters optimistic overconfidence.”

A great way to avoid this in your own planning is to conduct a “premortem” before getting started, wherein you imagine your plan or initiative has completely backfired and failed, and then reflect on how. Why did it all fall apart? What went awry? Were there fatal flaws from the start, or did other unforeseen factors bring it down? If nothing else, this exercise will help you highlight the most obvious ways your plans can fail and the most obvious mistakes you can make, which you can then account for in your planning.

5

The tendency to revise the history of one’s beliefs in light of what actually happened produces a robust cognitive illusion. Hindsight bias has pernicious effects on the evaluations of decision makers. It leads observers to assess the quality of a decision not by whether the process was sound but by whether its outcome was good or bad.

My Note

Anyone who has played sports at least semi-competitively knows that judging yourself by how well you executed the mechanics of playing the game is more fruitful than judging yourself by the outcomes of individual practices and games, because the better you are at the processes, the more good outcomes you’ll naturally experience.

I think the same is true in life. If you want more good outcomes in life, then you want to make more good decisions and take more good actions—decisions and actions that are good on their own merits. Does that mean every outcome will be good, even when decisions and actions are optimal? Of course not. You can never completely eliminate chance and risk and will inevitably experience negative outcomes despite having done “everything right,” but the more you do everything right, the more you can tip the odds in your favor and rest easy knowing that occasional bad outcomes are due more to bad luck than anything else, and that the law of large numbers will reward you over the long term.

Similarly, it’s a mistake to assume decisions and actions were sound because an outcome was good. In reality, the decisions and actions may have been horribly misguided and extremely likely to fail, and the positive outcome may have been due to dumb luck more than anything else.